Autonomous Decision and Control Laboratory


Algorithmic Contributions

- Scalable algorithms for partially observable Markov decision processes (POMDPs)

- Motion planning with safety guarantees

- Game-theoretic algorithms


Theoretical Contributions

- Particle POMDP approximation bounds


Applications

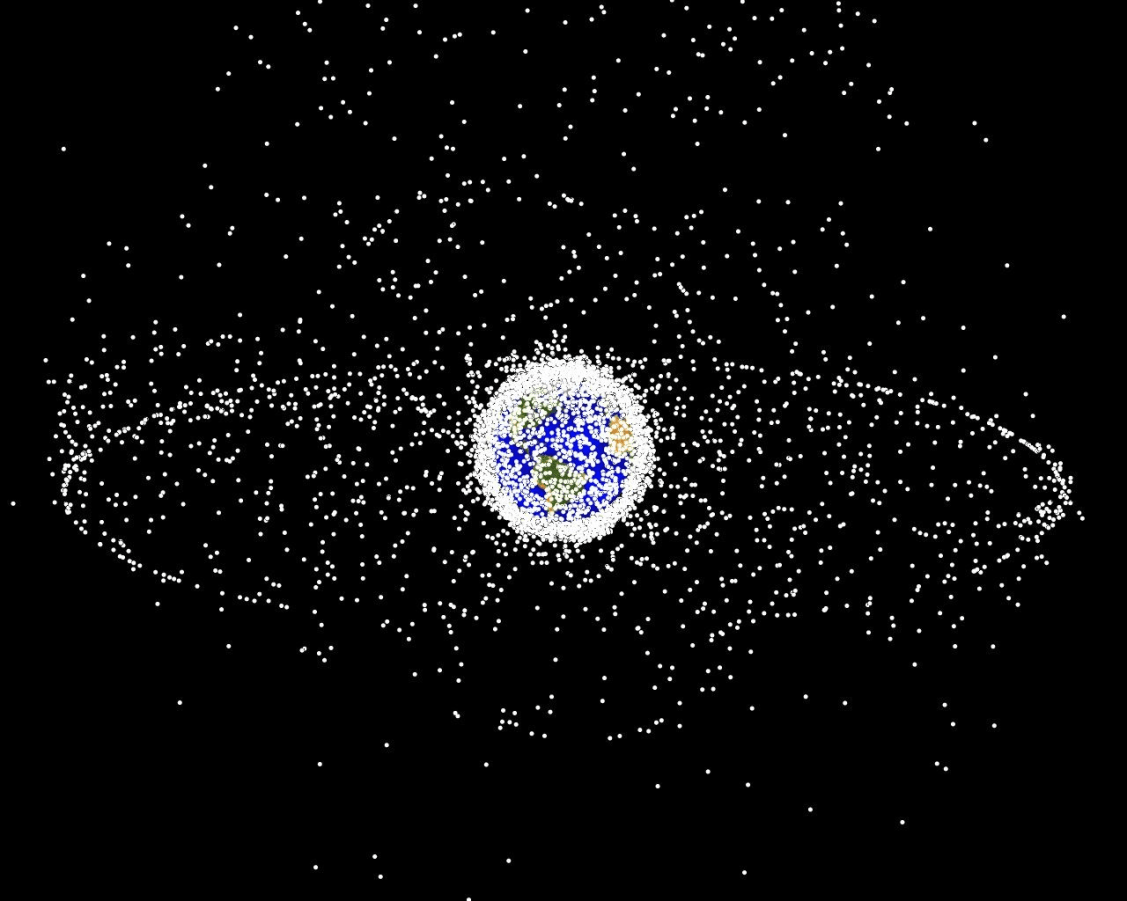

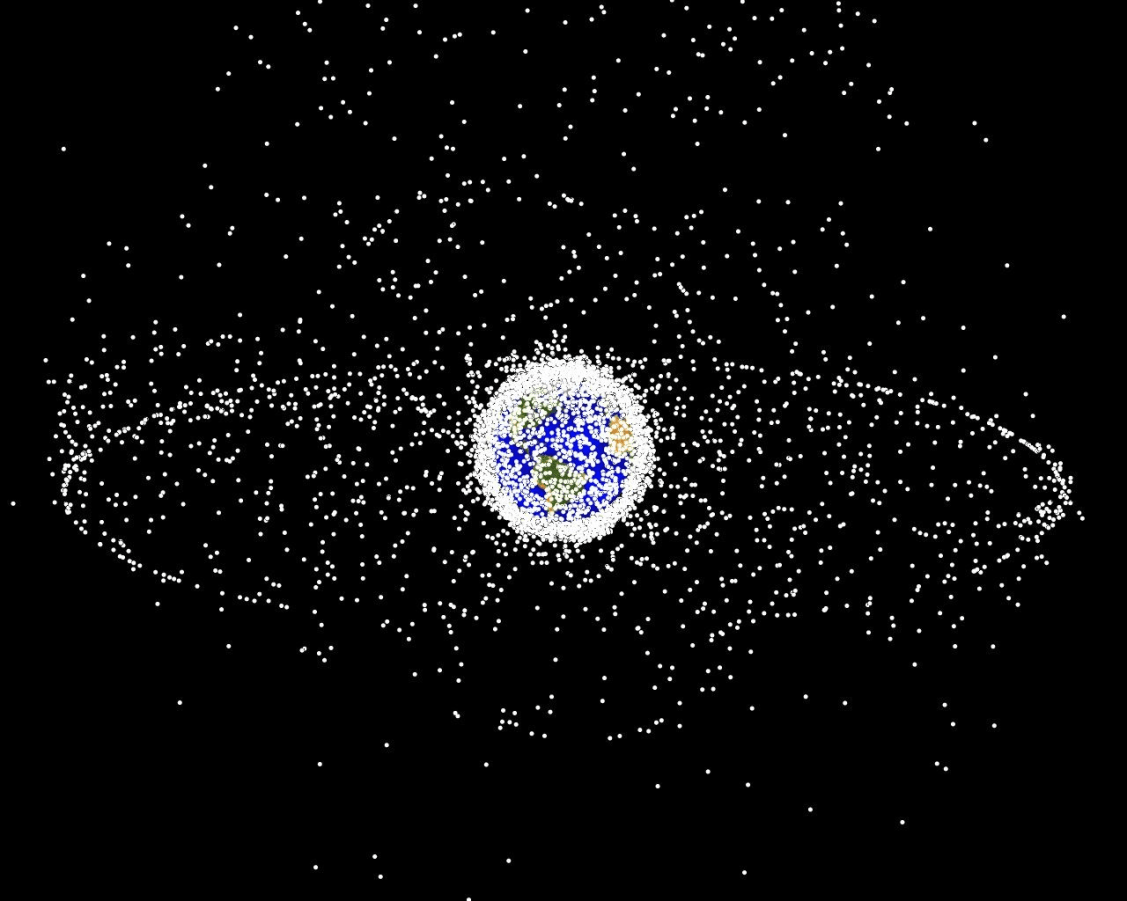

- Space Domain Awareness

- Autonomous Driving

- Autonomous Aerial Scientific Missions

- Search and Rescue

- Space Exploration

- Ecology


Open Source Software

- POMDPs.jl Julia ecosystem

PI: Prof. Zachary Sunberg

PhD Students

Postdoc

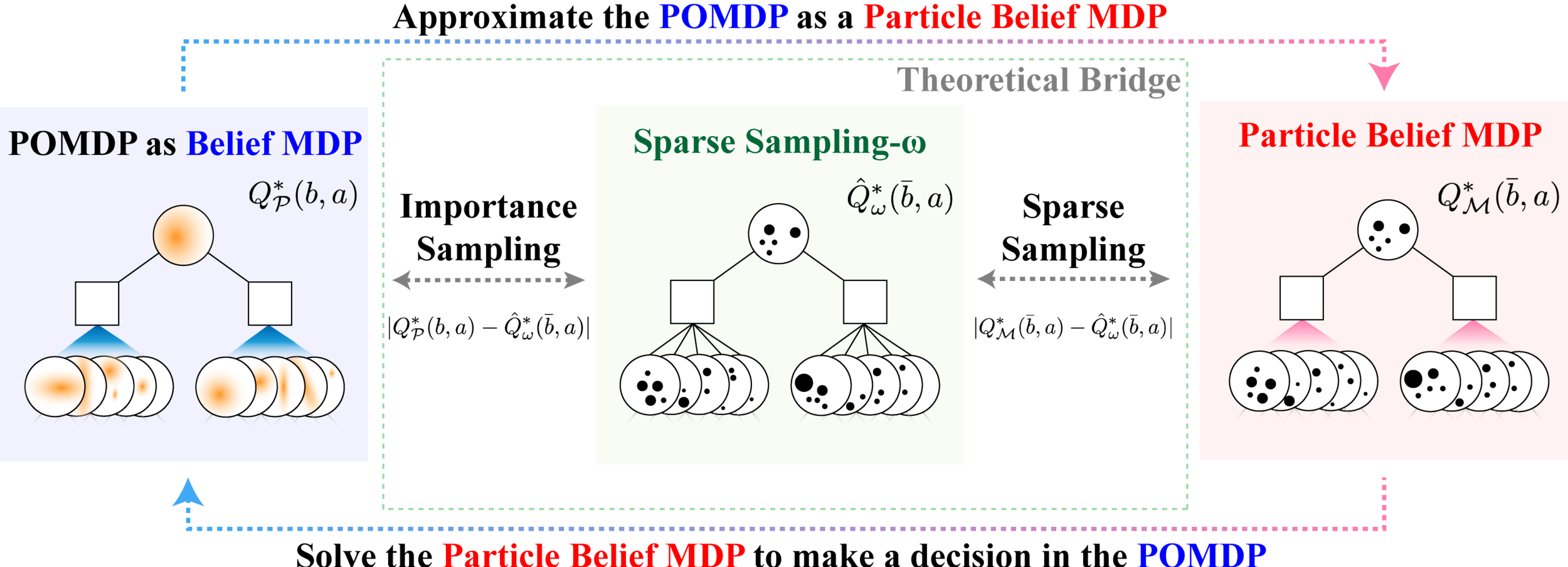

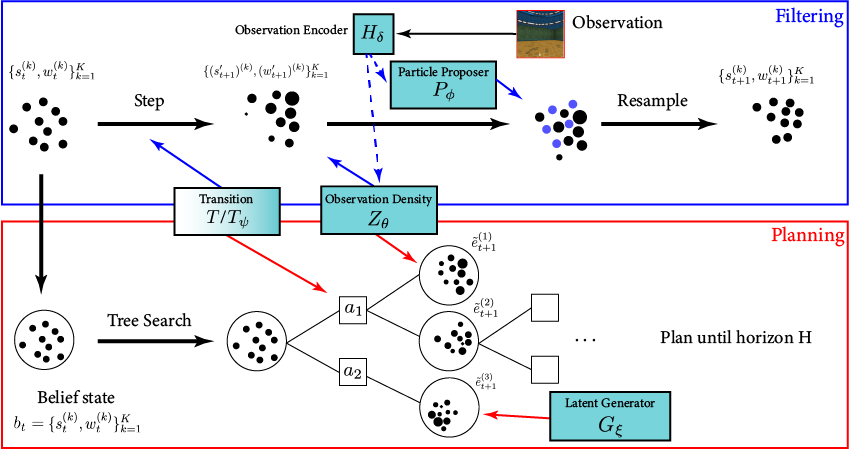

POMDP Algorithms

Machine learning components handle high-dimensional observations (e.g., images).

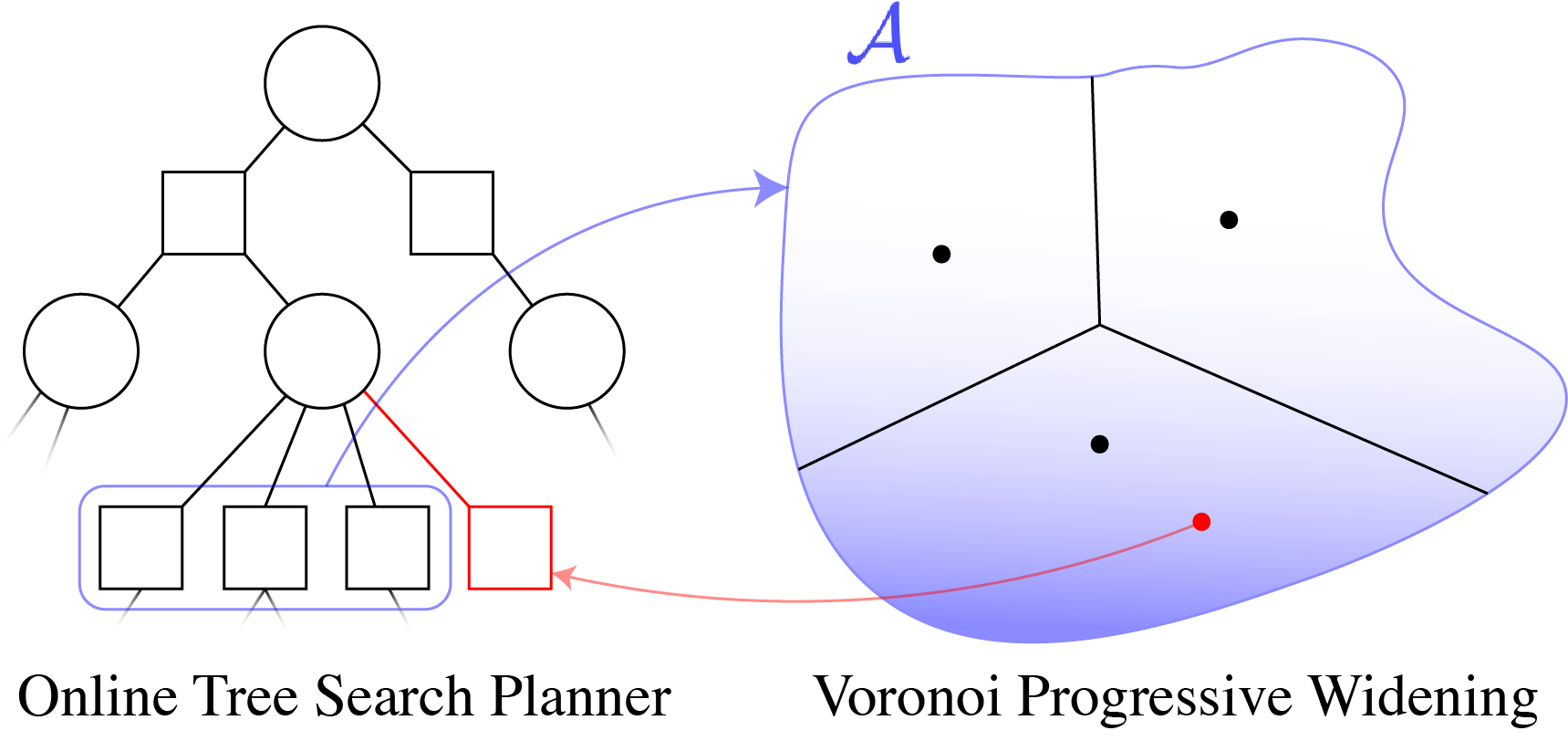

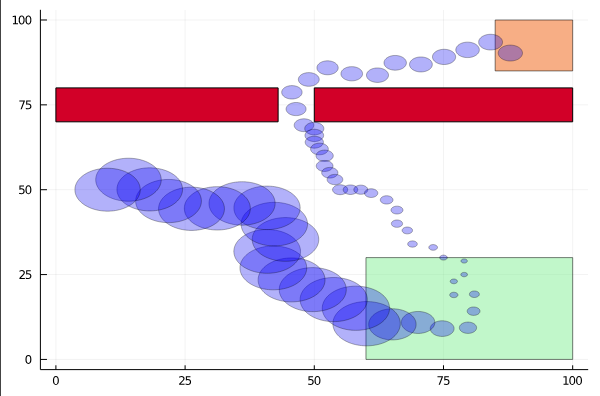

The ADCL's tree search algorithms solve realistic problems with continuous state, action and observation spaces.
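One common ingredient of tree search in continuous spaces is progressive widening, which limits how many children a node may have as a function of its visit count. The sketch below is a generic illustration of that rule, not the ADCL's implementation; the names `should_widen`, `select_action`, `k`, and `alpha` are assumptions made for this example, and the uniform choice among existing children stands in for a real selection criterion such as UCB.

```python
import random


def should_widen(num_children: int, num_visits: int,
                 k: float = 1.0, alpha: float = 0.5) -> bool:
    """Progressive widening test.

    A new child may be added while num_children <= k * num_visits ** alpha,
    so the branching factor grows slowly as the node is visited more often.
    """
    return num_children <= k * num_visits ** alpha


def select_action(children: list, num_visits: int, sample_action,
                  k: float = 1.0, alpha: float = 0.5):
    """Either sample a new continuous action or reuse an existing child."""
    if should_widen(len(children), num_visits, k, alpha):
        action = sample_action()      # draw a fresh action from the continuous space
        children.append(action)
        return action
    # Placeholder: a real solver would pick the child by a criterion such as UCB.
    return random.choice(children)


# Hypothetical usage: actions are steering angles drawn from [-1, 1].
children = []
first = select_action(children, num_visits=0,
                      sample_action=lambda: random.uniform(-1.0, 1.0))
```

With `alpha` below 1, the number of children grows sublinearly in the visit count, which is what lets the search revisit and refine promising actions instead of sampling a new one at every step.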

The ADCL is a world leader in the development of scalable online algorithms for partially observable Markov decision processes (POMDPs).

ADCL members developed the first general analytical bounds on particle belief approximation error. These bounds indicate that algorithms with complexity independent of the size of the state and observation spaces are possible.
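For readers unfamiliar with particle beliefs, the following is a minimal sketch of a standard bootstrap particle belief update: propagate each particle, weight it by the observation likelihood, and resample. It is a textbook illustration under stated assumptions, not the lab's code; the 1-D toy model and the names `update_particle_belief`, `toy_transition`, and `toy_obs_likelihood` are hypothetical.

```python
import math
import random


def update_particle_belief(particles, action, observation,
                           transition, obs_likelihood, rng):
    """One bootstrap-filter step on a list of state particles."""
    # 1. Propagate every particle through the (stochastic) transition model.
    propagated = [transition(s, action, rng) for s in particles]
    # 2. Weight each propagated particle by how well it explains the observation.
    weights = [obs_likelihood(observation, sp) for sp in propagated]
    if sum(weights) == 0.0:
        # Degenerate case: no particle explains the observation; keep them unweighted.
        return propagated
    # 3. Resample with replacement in proportion to the weights.
    return rng.choices(propagated, weights=weights, k=len(particles))


def toy_transition(s, a, rng):
    """Assumed toy dynamics: move by `a` with small Gaussian noise."""
    return s + a + rng.gauss(0.0, 0.1)


def toy_obs_likelihood(o, sp, sigma=0.5):
    """Assumed toy sensor: unnormalized Gaussian likelihood of a position reading."""
    return math.exp(-0.5 * ((o - sp) / sigma) ** 2)


rng = random.Random(0)
prior = [rng.uniform(-5.0, 5.0) for _ in range(1000)]
posterior = update_particle_belief(prior, 1.0, 2.0,
                                   toy_transition, toy_obs_likelihood, rng)
posterior_mean = sum(posterior) / len(posterior)
```

The cost of this update depends on the number of particles rather than on how many states or observations exist, which is the property that makes particle beliefs attractive in continuous spaces.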

See cu-adcl.org/publications for related peer-reviewed and submitted papers.

BOMCP

Voronoi Progressive Widening

POMCPOW

Applications

The algorithms that the ADCL develops are general and have proven effective in a wide range of applications, including the following:


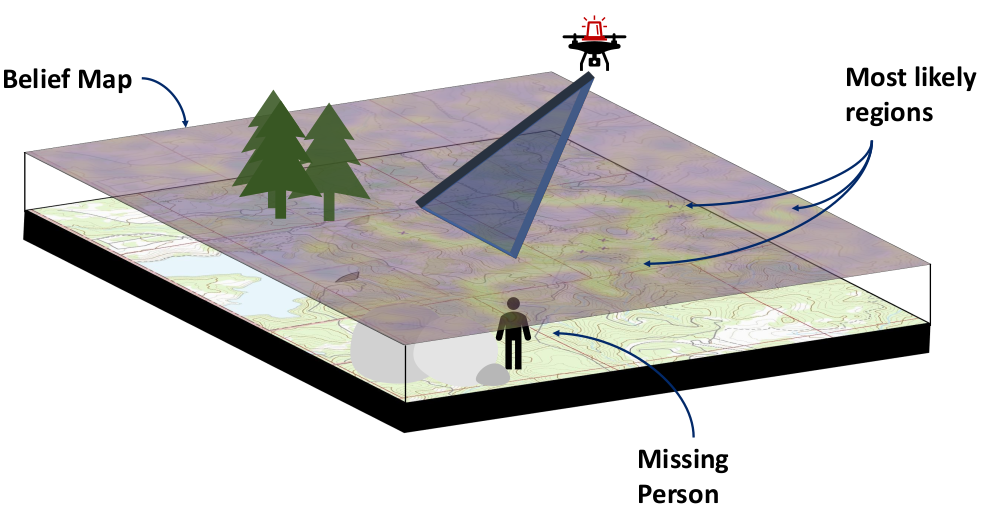

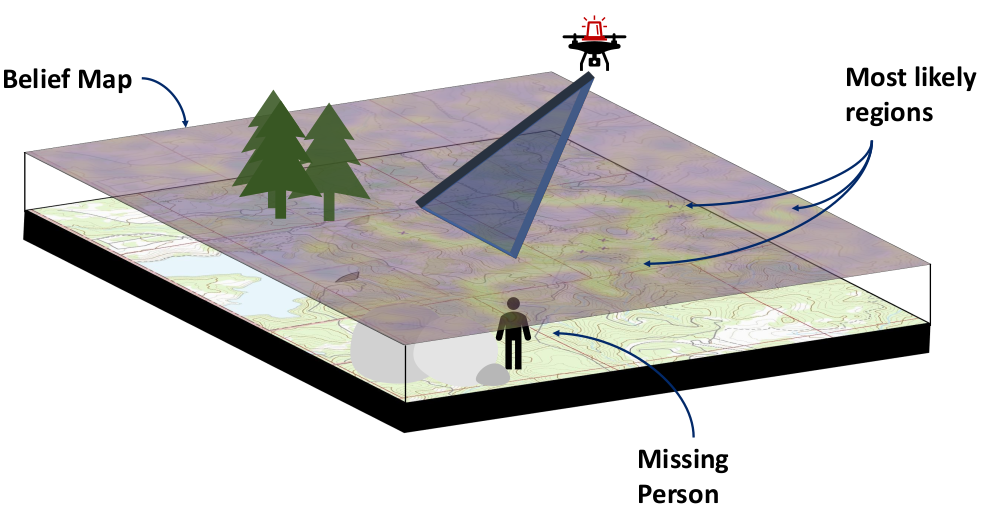

Search and Rescue

- In collaboration with the COHRINT lab, we are developing a planner to automatically search an area for a lost or injured hiker with a multirotor aircraft.

Storm Science

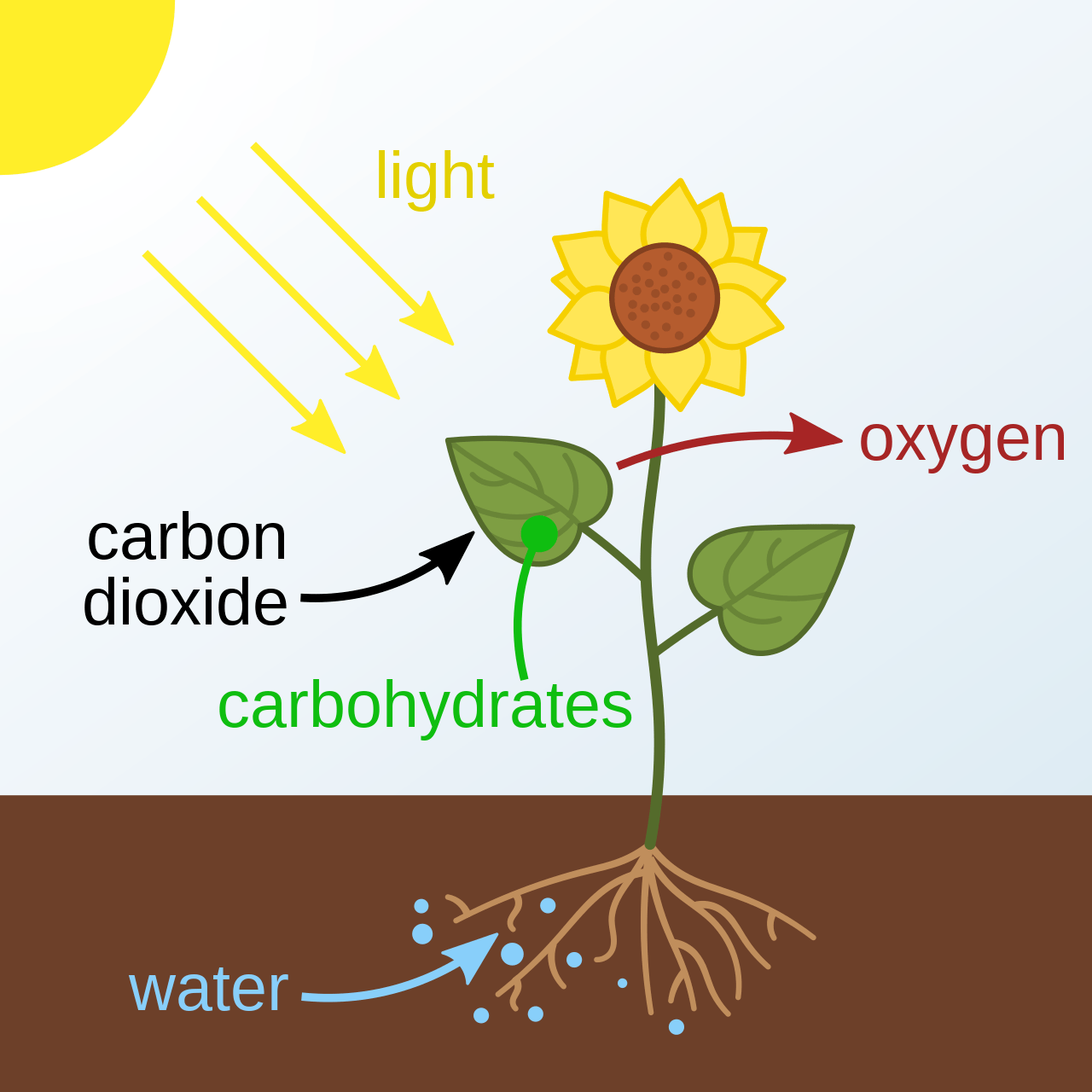

- In collaboration with other RECUV researchers, we are developing algorithms for drones equipped with sensors to find paths within a storm that gather the most informative data.

Planetary Exploration

- In collaboration with NASA's Jet Propulsion Laboratory, we are developing algorithms for safe autonomy for planetary exploration rovers.

Autonomous Driving

- Our algorithms can help autonomous vehicles safely interact with other road users such as pedestrians or human-driven cars.

Space Domain Awareness

- We have developed game-theoretic algorithms for deception-robust sensor tasking (see Game Theory below).

Explainable Decision Support

- In collaboration with the Johns Hopkins University Applied Physics Lab, we are developing methods for AI decision support algorithms to explain their conclusions and better understand what human operators want.

Ecology

- In collaboration with the University of California, Berkeley, we have developed algorithms to plan the rebuilding of ecosystems by adding key species sequentially.

Robotic Motion Planning

- In collaboration with other RECUV researchers, we have developed algorithms for safe motion planning under uncertainty.

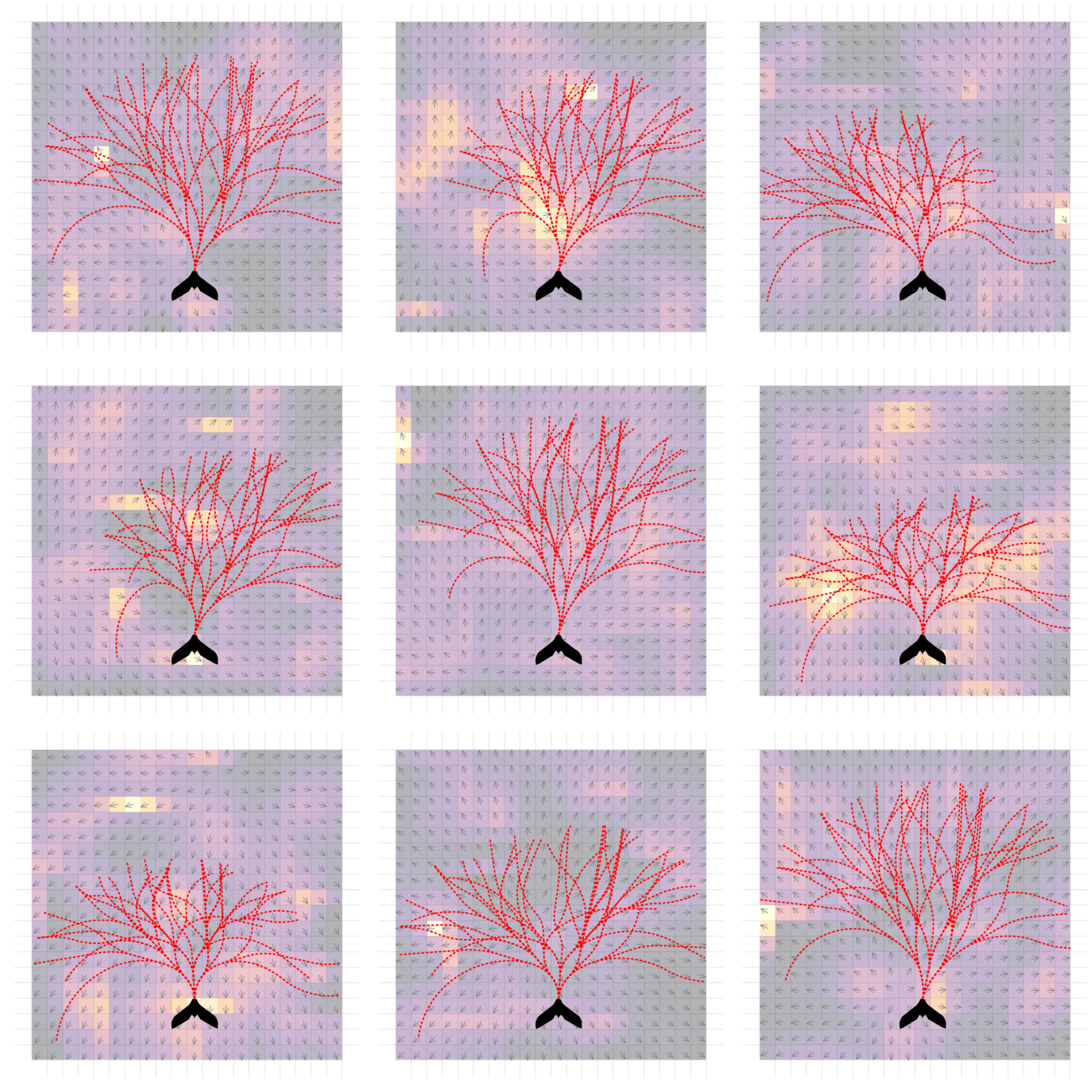

Game Theory

A new application area for the ADCL is game theory. When rational agents have different goals, a single optimization problem can no longer represent their behavior. Instead, game theory provides a mathematical framework for reasoning about how rational agents interact.

- In partially observable domains, agents with opposing goals may try to outsmart each other by acting unpredictably. This type of behavior is captured by the game-theoretic concept of a mixed Nash equilibrium.

- Algorithms for calculating mixed Nash equilibria are mostly limited to tabletop games such as poker; new algorithms are needed to find these solutions in physical problems.

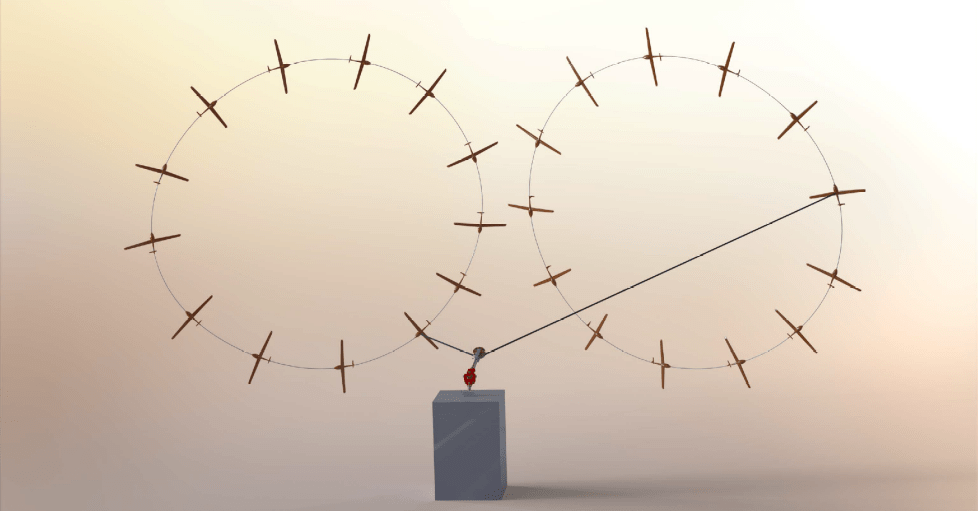

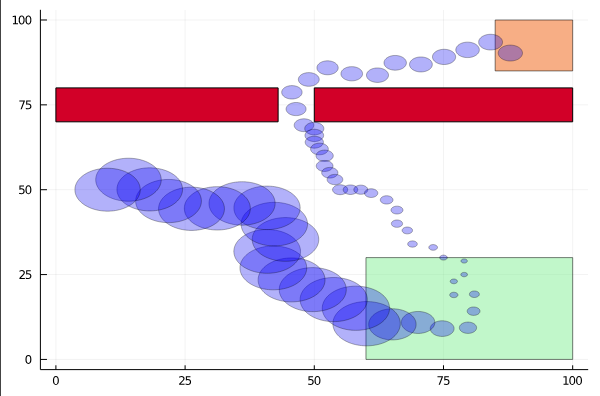

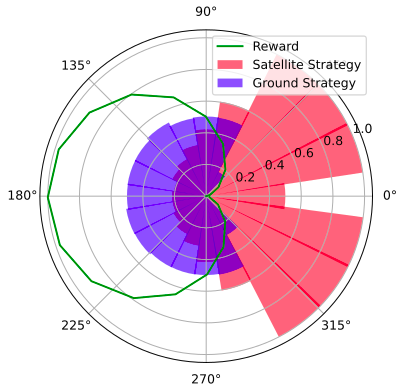

- ADCL members have applied this theory to a space domain awareness game, calculating stochastic surveillance strategies (shown in the figure at left) that deter a satellite from taking action in a sensitive region (green) by increasing its probability of detection.
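To make the idea of a mixed Nash equilibrium concrete, here is a small sketch that solves a 2x2 zero-sum matrix game in closed form. It is far simpler than the physical games described above and is not the method used in the lab's work; the function name `solve_2x2_zero_sum` and the detection-probability payoffs are hypothetical values chosen for illustration.

```python
def solve_2x2_zero_sum(A):
    """Equilibrium of a 2x2 zero-sum game with payoff matrix A for the row player.

    Returns (p, q, value): p is the probability the row player picks row 0,
    q is the probability the column player picks column 0, and value is the
    row player's expected payoff at equilibrium.
    """
    (a, b), (c, d) = A
    # Check for a pure-strategy saddle point first (maximin equals minimax).
    row_mins = [min(a, b), min(c, d)]
    col_maxs = [max(a, c), max(b, d)]
    maximin, minimax = max(row_mins), min(col_maxs)
    if maximin == minimax:
        i, j = row_mins.index(maximin), col_maxs.index(minimax)
        return (1.0 if i == 0 else 0.0), (1.0 if j == 0 else 0.0), float(maximin)
    # Otherwise each player mixes so that the opponent is indifferent.
    denom = a - b - c + d
    p = (d - c) / denom
    q = (d - b) / denom
    value = (a * d - b * c) / denom
    return p, q, value


# Hypothetical surveillance game: the sensor (row) watches region 0 or 1,
# the satellite (column) maneuvers in region 0 or 1, and the payoff is the
# assumed detection probability when both choose the same region.
detection = [[0.9, 0.0],
             [0.0, 0.6]]
p_watch_0, q_maneuver_0, detect_prob = solve_2x2_zero_sum(detection)
```

In this toy game the sensor watches region 0 about 40% of the time: because the sensor is more effective there, the satellite avoids that region more often, and the sensor in turn spends more time on the weaker region. Neither player can do better by switching to a predictable strategy.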
